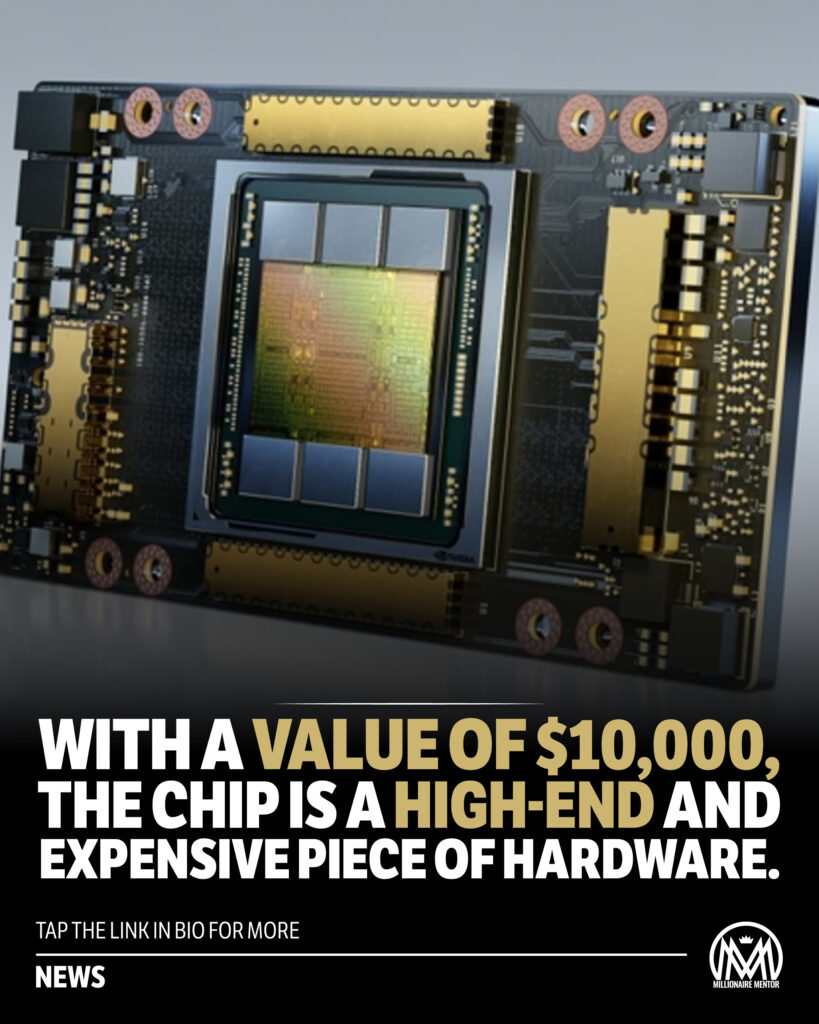

Nvidia, the American technology company known for designing graphics processing units (GPUs), has released one of its most powerful GPUs to date, the Nvidia A100. Priced at roughly $10,000, the A100 is a testament to Nvidia’s commitment to driving the race for artificial intelligence (AI) forward.

The A100 is built on Nvidia’s Ampere architecture, which targets AI and high-performance computing workloads. It packs 54 billion transistors and is manufactured on a 7-nanometer process, making it one of the most powerful and energy-efficient GPUs on the market.

The A100 is optimized for AI training and inference, the two main stages of the AI development process. Training involves feeding large amounts of data into an AI model so that it can adjust its parameters and improve over time. Inference, on the other hand, involves using a trained model to make predictions or decisions based on new data. Both tasks require a tremendous amount of computing power, which is where the A100 comes in.
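To make the distinction between training and inference concrete, here is a minimal sketch in plain Python. It is a toy illustration, not anything specific to the A100: the model is a single straight line, and the function names `train` and `infer`, the learning rate, and the sample data are all assumptions made up for this example.

```python
# Toy illustration only: a one-variable linear model fitted by gradient descent.
# All names and numbers here are hypothetical, chosen for the example.

def train(samples, epochs=5000, lr=0.01):
    """Training: repeatedly adjust w and b to reduce the mean squared error."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def infer(model, x):
    """Inference: apply the already-trained parameters to a new input."""
    w, b = model
    return w * x + b

# Training data generated from y = 3x + 1 (made-up data for the sketch).
training_data = [(float(x), 3.0 * x + 1.0) for x in range(10)]
model = train(training_data)

# Inference on an input the model never saw during training.
prediction = infer(model, 20.0)
print(round(prediction, 2))
```

Training loops over the whole data set thousands of times, while inference is a single cheap evaluation. Real models have millions or billions of parameters, which is why both stages are usually offloaded to hardware like the A100.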

One of the key features of the A100 is its Tensor Cores, which are specialized processing units that perform the matrix operations at the heart of AI workloads at very high speed. The A100 pairs 6,912 CUDA cores with 432 third-generation Tensor Cores. That is fewer Tensor Cores than the 640 found in its predecessor, the Nvidia V100, but each one delivers substantially more throughput.
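The operation a Tensor Core accelerates is a matrix multiply-accumulate, D = A × B + C, applied to small tiles of much larger matrices. The sketch below shows only the arithmetic, in plain Python on a 2×2 tile; it does not model the hardware, its precision modes, or any Nvidia API, and the function name `matmul_accumulate` is a hypothetical chosen for this example.

```python
# Arithmetic sketch only: the multiply-accumulate pattern D = A x B + C.
# The function name and tile values are hypothetical, not an Nvidia API.

def matmul_accumulate(a, b, c):
    """Return a x b + c for matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [c[i][j] + sum(a[i][k] * b[k][j] for k in range(inner))
         for j in range(cols)]
        for i in range(rows)
    ]

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]

d = matmul_accumulate(a, b, c)
print(d)  # [[20, 23], [44, 51]]
```

Neural network layers reduce largely to this pattern repeated over many tiles, which is why dedicated hardware for it pays off.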

The A100 also features Multi-Instance GPU (MIG) technology, which allows a single card to be divided into as many as seven smaller, isolated GPU instances that run different workloads at the same time. MIG is particularly useful for large organizations that need to run multiple AI projects in parallel, as it allows them to allocate resources more efficiently and reduce costs.
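The resource-sharing idea behind MIG can be sketched as a simple bookkeeping problem. The example below is a deliberately simplified model, assuming a GPU made of seven equal compute slices (an A100 supports up to seven MIG instances) and a first-come, first-served policy. The function `partition`, the workload names, and the slice counts are hypothetical; real MIG uses fixed instance profiles and placement rules that this sketch ignores.

```python
# Simplified model of splitting one GPU among several workloads.
# Not the Nvidia MIG API: names, sizes, and policy are assumptions.

TOTAL_SLICES = 7  # an A100 can be split into at most seven MIG instances

def partition(requests, total_slices=TOTAL_SLICES):
    """Greedily place (name, slices) requests; return placed, rejected, free."""
    placed, rejected = {}, []
    free = total_slices
    for name, slices in requests:
        if slices <= free:
            placed[name] = slices   # workload gets its own isolated share
            free -= slices
        else:
            rejected.append(name)   # not enough capacity left on this GPU
    return placed, rejected, free

requests = [
    ("training", 4),
    ("inference-a", 2),
    ("inference-b", 2),
    ("notebook", 1),
]

placed, rejected, free = partition(requests)
print(placed)    # {'training': 4, 'inference-a': 2, 'notebook': 1}
print(rejected)  # ['inference-b']
print(free)      # 0
```

In this toy run, three workloads share one card and a fourth has to wait or move to another GPU. That is the efficiency argument in miniature: small jobs no longer each tie up an entire A100.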

In addition to its raw computing power, the A100 also benefits from Nvidia’s software stack, which includes libraries and frameworks specifically designed for AI development. These tools make it easier for developers to build and optimize AI models, reducing the time and resources required to bring new AI applications to market.

The A100 has already found its way into some of the world’s most powerful supercomputers, including the Perlmutter system in the United States and the JUWELS Booster in Germany. These supercomputers are used for a wide range of applications, from climate modeling to drug discovery, and the A100’s performance is critical to their success.

The A100’s impact, however, extends far beyond the realm of supercomputers. Its high performance and energy efficiency make it an ideal choice for AI applications in industries such as healthcare, finance, and transportation, where the ability to process large amounts of data quickly and accurately is essential.

The A100 is also an important development in the race for AI supremacy, which is currently dominated by large tech companies such as Google, Microsoft, and Amazon. Nvidia’s focus on AI-specific hardware and software has allowed it to carve out a niche in this highly competitive market, and the A100 is poised to cement the company’s position as a leader in AI computing.

The Nvidia A100 is a game-changer in the world of AI computing. Its raw computing power, energy efficiency, and AI-specific features make it an ideal choice for a wide range of AI applications, from supercomputing to industry-specific use cases. While the A100’s price tag may put it out of reach for many organizations, its impact on the AI landscape is sure to be felt for years to come.
